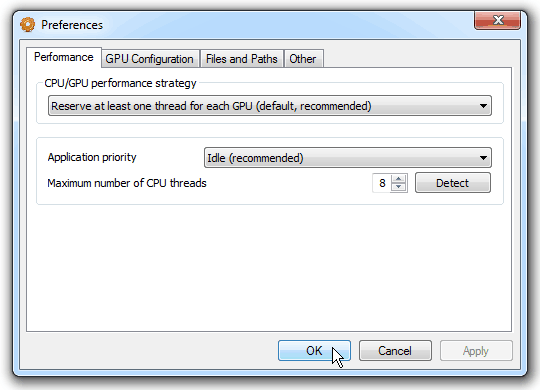

CPU/GPU performance strategy. GPU devices do not work entirely independently of the CPU. At least one CPU thread is active for each GPU core; it supplies input data, synchronizes with the GPU kernel, and post-processes the results. Waiting for a GPU kernel to complete is not a very resource-consuming task, but it still counts. On very fast GPUs, missing the exact moment when a kernel finishes by just a few milliseconds can cause a performance drop of tens of percent. In that situation we want to poll the GPU status as often as possible, even if that makes one CPU core unavailable for "computation" tasks. On slow GPUs, however, we may want to use all CPU cores for computation as well, so that the "computation" cores are shared with the GPU kernel synchronization threads (missing a few milliseconds here will not affect performance much).
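
The trade-off above can be illustrated with some simple arithmetic. The sketch below is not taken from the program; the function name `throughput_loss` and the timings (a 10 ms kernel, a 500 ms kernel, a 2 ms detection delay) are assumptions chosen only for illustration. It assumes the GPU sits idle from the moment a kernel finishes until the CPU notices and launches the next one.

```python
def throughput_loss(kernel_ms: float, detect_delay_ms: float) -> float:
    """Fraction of GPU throughput lost when every kernel completion
    is noticed detect_delay_ms late (hypothetical model, see lead-in)."""
    if kernel_ms <= 0 or detect_delay_ms < 0:
        raise ValueError("kernel_ms must be > 0 and detect_delay_ms >= 0")
    # Each cycle takes kernel time plus idle time; only kernel time is useful.
    return detect_delay_ms / (kernel_ms + detect_delay_ms)


# Illustrative numbers only: a "fast" GPU with short kernels
# and a "slow" GPU with long kernels, both noticed 2 ms late.
fast = throughput_loss(kernel_ms=10, detect_delay_ms=2)
slow = throughput_loss(kernel_ms=500, detect_delay_ms=2)

print(f"fast GPU: {fast:.1%} lost")  # fast GPU: 16.7% lost
print(f"slow GPU: {slow:.1%} lost")  # slow GPU: 0.4% lost
```

Under these assumed timings the same 2 ms delay costs the fast GPU far more than the slow one, which is why frequent polling tends to pay off only on fast devices.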

Several strategies are available:

Application priority. You can set the amount of system resources (mostly processor time) that the program uses while it runs:

Maximum number of CPU threads. The program automatically detects the number of processor cores and creates a matching number of threads. This number can also be adjusted manually. The value covers only the "computational" CPU threads, not the GPU synchronization threads.
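
As a rough sketch of how the thread count and the CPU/GPU strategy might interact, the example below splits the detected cores between computation and GPU synchronization. It is an illustration, not the program's actual logic: `plan_cpu_threads`, its parameters, and the rule of one synchronization thread and one reserved core per GPU are all assumptions.

```python
import os


def plan_cpu_threads(cpu_cores: int, gpu_count: int, fast_gpus: bool) -> dict:
    """Hypothetical split of CPU threads between computation and GPU sync."""
    if cpu_cores < 1 or gpu_count < 0:
        raise ValueError("cpu_cores must be >= 1 and gpu_count >= 0")
    # Assumption: exactly one synchronization thread per GPU.
    sync_threads = gpu_count
    if fast_gpus:
        # Fast GPUs: leave one core per GPU free for constant status polling.
        compute_threads = max(cpu_cores - gpu_count, 0)
    else:
        # Slow GPUs: computation threads share all cores with the sync threads.
        compute_threads = cpu_cores
    return {"compute_threads": compute_threads, "sync_threads": sync_threads}


# os.cpu_count() may return None, so fall back to a single core.
detected_cores = os.cpu_count() or 1
print(plan_cpu_threads(detected_cores, gpu_count=1, fast_gpus=True))
```

In this sketch only `compute_threads` corresponds to the "maximum number of CPU threads" setting; the synchronization threads are counted separately, as described above.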